Commitment to AI Safety

Core Mission

IAISS advances responsible AI by tackling safety in agentic and embodied systems, such as robots that act autonomously in real-world environments. It emphasizes risk mitigation to prevent harm while maximizing societal benefits.

Key efforts target AI alignment, ensuring that systems behave as intended without unintended consequences, especially in physical or decision-making contexts. This includes studying world models for safer predictions in robotics and autonomous driving.

IAISS delivers recommendations to governments for binding regulations, audits, and international standards to enforce accountability. Collaboration with bodies such as AI Safety Institutes promotes unified global frameworks.

Research Focus

Policy Advocacy

The Institute for AI Safety and Strategy (IAISS) is a non-profit organization that focuses on promoting safe, ethical AI development through research, policy, and collaboration. Its principles align closely with global efforts in AI governance.

These efforts aim to foster innovation while ensuring that AI technologies align with human values and societal needs, contributing to a more equitable world in which technology improves people's lives rather than complicating them.

Resources and training target policymakers, industry, and researchers to build AI literacy and ethical practices. This fosters equitable deployment that upholds human rights.

Educational Outreach

Ethical Commitment

Global Collaboration

IAISS prioritizes human rights and equitable values in all AI initiatives. By embedding fairness and inclusivity from design through deployment, it ensures that technologies promote societal good. This safeguards against biases and disparities while fostering trust among diverse stakeholders.

By partnering with intergovernmental groups, IAISS drives standards for risk management and incident reporting across borders. Long-term foresight integrates safety into policymaking for sustainable AI benefits.

Multi-domain forecasting framework for key aspects of AI development through 2030, focusing on automation, labor markets, AI capabilities, and geopolitical influences.

Crucial insights into the complex interplay of AI technology, economic impacts, and international cooperation, underscoring the need for proactive governance in the face of rapid AI advancements.

Policy considerations and recommendations for global coordination to dramatically reduce catastrophic AI risks.

Strategic isolation of key intervention windows for effective governance of autonomous systems, and coordinated, actionable policy to mitigate economic impacts, such as:

Reskilling and training programs

Progressive taxation on AI-generated profits

AI (Catastrophe) Forecasting


AI Strategy

3-part course series:

"Responsible AI: Generative AI Use in The Kingdom’s Healthcare System."

Designed for clinicians and healthcare leaders, this series aligns with Vision 2030 and the Health Sector Transformation Program (HSTP). It bridges innovation, ethics, and regulation to position Saudi Arabia as a global leader in governed AI-enabled care.

AI serves as a clinical co-pilot but demands governance, data residency, and privacy safeguards. This series prepares professionals for sovereign, ethical, patient-centered AI leadership.

Covers generative AI from clinician and patient perspectives. Explores the “Triad of Governance” (MoH, SDAIA, NHIC) and a 5-layer validation process for AI outputs. Teaches safe prompting that respects Saudi cultural and clinical constraints.

Part 1: Foundation & Safe Practice

Part 2: Ethics, Law & Professional Pledge

Part 3: Technical Mastery & Future Readiness

Dives into the implications of the Personal Data Protection Law (PDPL) for health data. Integrates bioethical principles (Amanah, Adl, Sitr) into workflows. Emphasizes that human judgment supersedes automation.

Addresses Sovereign AI deployment and zero-retention architectures for data protection. Guides the scaling of AI from pilot projects to institution-wide excellence. Prepares participants for the evolving Saudi AI policy landscape.

Teaching

Forecasting sophisticated, multi-staged cybersecurity attacks on critical infrastructure.

Crucial insights into the complex interplay of AI technology and cybersecurity mitigation.

Simulations and recommendations to dramatically reduce risks.

Strategic isolation of key intervention components

Complex AI Cyber Attacks Navigation

Sentinel Nexus

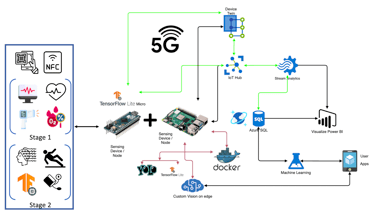

Underlying multimodal architecture for detecting cyber breaches

Aurora Core

Comprehensive Entity Search and Analysis

NexusInt

Rapidly Deployable, Secure, End-to-End AI Infrastructure:

MicroDatacenter, AI architecture, edge compute, on-premises AI development

AI Solutions

Innovative technologies for national research security and critical infrastructure.

AI-Driven Security

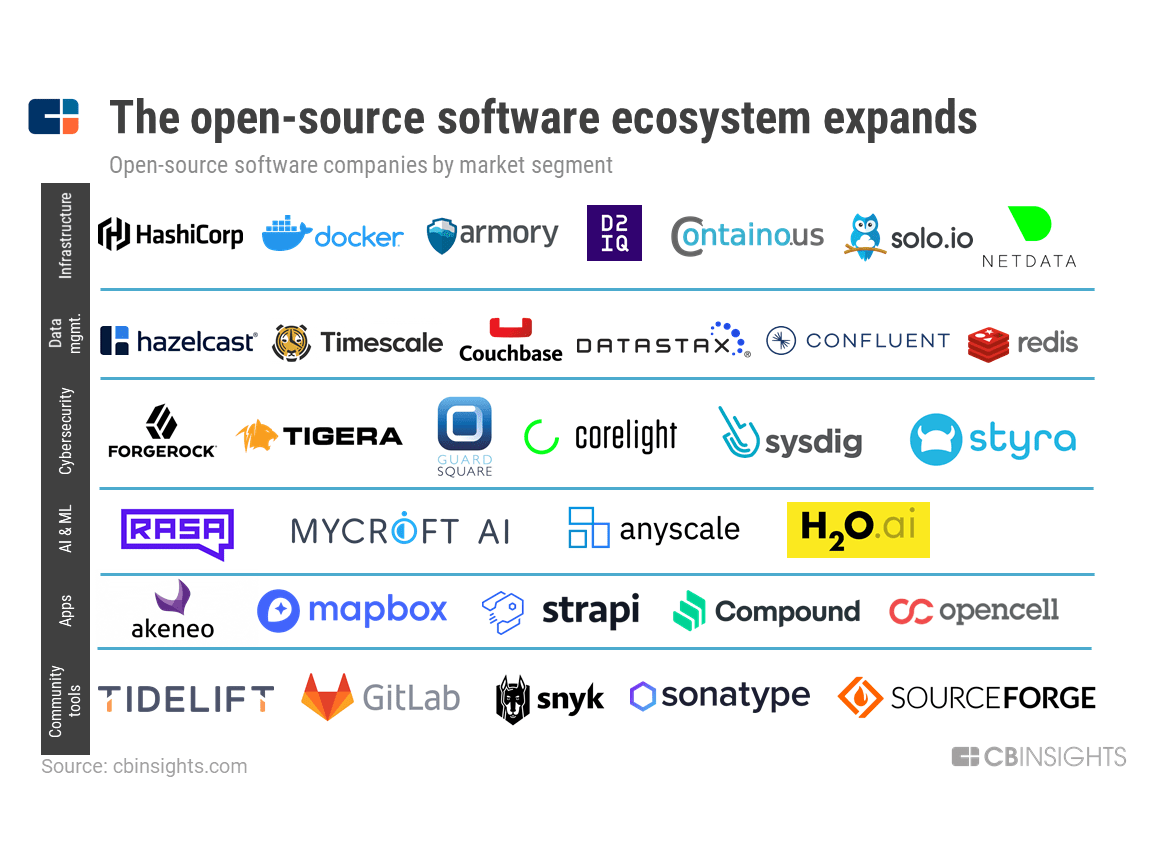

Harnessing open-source models and advanced architecture for secure systems tailored to government needs.

OSINT Expertise

Delivering privacy-preserving solutions that empower intelligence gathering while upholding security standards.

Contact Us

Get in touch for inquiries about our research on AI safety, risk mitigation, and strategic policy frameworks.

Location

The Institute for AI Safety and Strategy (IAISS) is based in Canada.

Address

13986 Cambie Rd. Unit 278, Richmond, BC V6V 2K3

Support

info@ai-rd.ca

© 2026. All rights reserved.
